It’s actually rocket science. Skyler Jennings, AuD, PhD, of the Department of Communication Sciences and Disorders is part of a team that has received Air Force research funding for a five-year project. The project studies how the brain talks to the ear and how that communication depends on whether you’re sitting up, lying down, or in space.

The project, “Gravity Dependent Cortical Control of Sensation,” is a collaboration between the Jennings Lab and colleagues at Boston University, Brandeis University, and the Weizmann Institute. The investigators hope to demonstrate that a communication feedback loop exists between the cortex and the inner ear, the organ that governs both hearing and balance.

“The main knowledge we’re trying to establish is the extent to which the brain talks to the ear and how that affects perception,” Jennings said. “We predict the communication between the brain and the ear should depend on your position relative to gravity.”

Hear, Hear: Does the Brain Talk to the Ear?

Most sensations, like taste, touch and sight, are enhanced by the movement of their sensory organs: the tongue, the skin or the eyes. This interplay between the brain and the sensory system, known as “active sensing,” drives our perception.

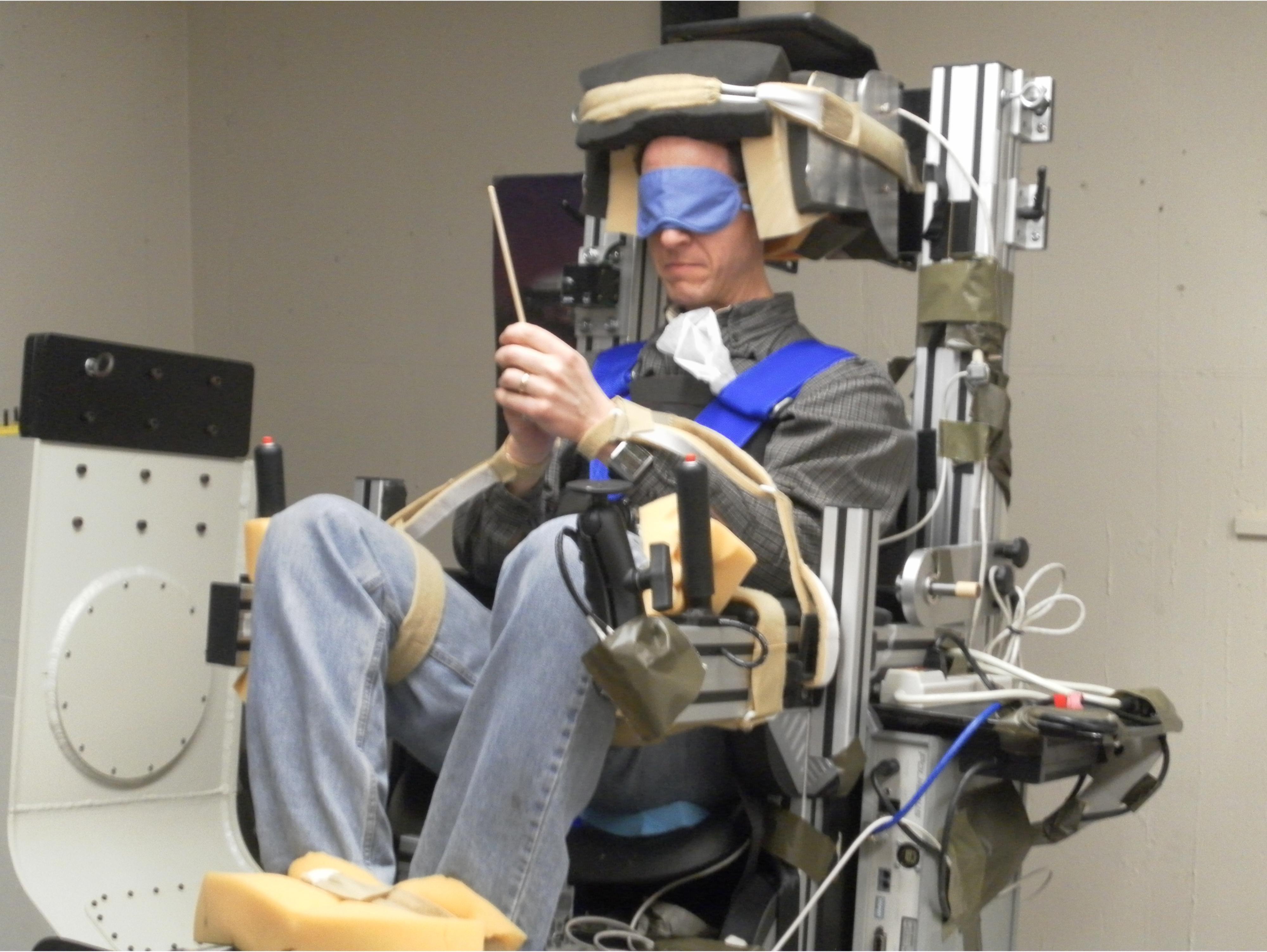

Jennings said that his team would be the first to test the hypothesis that hearing behaves in the same way. They want to determine whether the brain tells the inner ear to adjust its response to facilitate a goal that affects both hearing and balance. This knowledge is essential to understanding how pilots perform in weightless conditions.

Previous studies have shown that when free-floating pilots or astronauts experience disorienting visual and contact cues, they lose their sense of direction, which can be dangerous and even deadly. Balance and hearing can help orient pilots during mid-flight crises.

“Our hearing and balance systems combine to form the inner ear—it makes sense that they talk to one another and might influence one another,” Jennings said. “If we can understand more about how the brain communicates with the inner ear’s hearing and balance centers, the Air Force can consider this knowledge when they’re developing communications systems and safety protocols for pilots and astronauts.”

Although Jennings has never conducted research in the field of balance, his lab is one of the few that have developed a technique to measure the electrical response of the inner ear. His team has created an electrode that can be comfortably placed on the eardrum. The electrode measures the inner ear’s response under conditions in which brain communication might be involved.

“We’ve been able to show pretty convincingly that the brain is talking to the inner ear,” he said. “It’s a new area of research for me, but I’m involved in this study because of the technique I’ve developed to measure that communication.”

A Model Approach to Sound Recognition

The rest of the team involved in this research is building a computer model that predicts how the brain interfaces with the ears. This model is one of the project deliverables that the Air Force can use in simulations with pilots. The team’s combined research will help optimize in-flight communication, which is especially valuable when emergencies arise.

Jennings is no stranger to computer models—his lab also works on models of the auditory system. They use simulation experiments to determine the acoustic cues, like intensity or frequency, that listeners focus on when making decisions about the sounds they hear. These decisions may involve detecting a sound, discriminating between two sounds, or comprehending speech in a noisy background.

The simulations predict how the human auditory system responds to sound, without using invasive techniques often employed in animal studies, like inserting a needle electrode directly into the inner ear.

“Establishing a relationship between the brain and the ear is really important, especially as we get older and experience hearing loss,” Jennings said. “The normal communication between the brain and ear changes quite a bit and causes us to struggle a lot more in noisy backgrounds. If we can better understand this communication, we can improve the way that devices like hearing aids are used.”

And the application goes beyond hearing aids. It can also apply to speech recognition systems, which currently don’t consider the link between the brain and the ear and struggle with background noise. That includes the technology used in all kinds of communications systems, which could spark the interest of tech companies and the government.

From Rock Bands to Research

All this research is based in hard science, but Jennings also approaches the field from an artistic perspective. A lifelong musician, he played in the Ute Marching Band, toured with rock groups around the state, and even performed in the 2002 Winter Olympics.

Before joining the faculty, Jennings enrolled as a student at the University of Utah with plans to work in an audiology clinic, but his academic training launched him on a different trajectory that included seven years of clinical and research training at Purdue University. He’s proved to be a prolific researcher, participating in the Vice President’s Clinical and Translational Scholars Program and generating more than $1 million in grant funding during his tenure in the College of Health.

“Music drew me to sound, which drew me to audiology,” he said. “I got into my academic training and professors told me I think about things in a different way. That planted the seed to pursue graduate studies, I discovered I really enjoyed research, and I’ve never looked back.”

By Sarah Shebek